onionoo MCP: a query service for Tor relays¶

Onionoo is the Tor Project's metadata API for Tor relays and bridges. Anyone can query it over HTTP for the current state of the network: fingerprints, IPs, country and ASN, consensus flags (Guard, Exit, HSDir, and so on), bandwidth history, and uptime time series. The Tor Project's own Relay Search and most third-party Tor dashboards are powered by it.

onionoo-fastapi is a community-hosted wrapper around Onionoo. It exposes the same data through two interfaces that are easier for tooling and AI agents to consume:

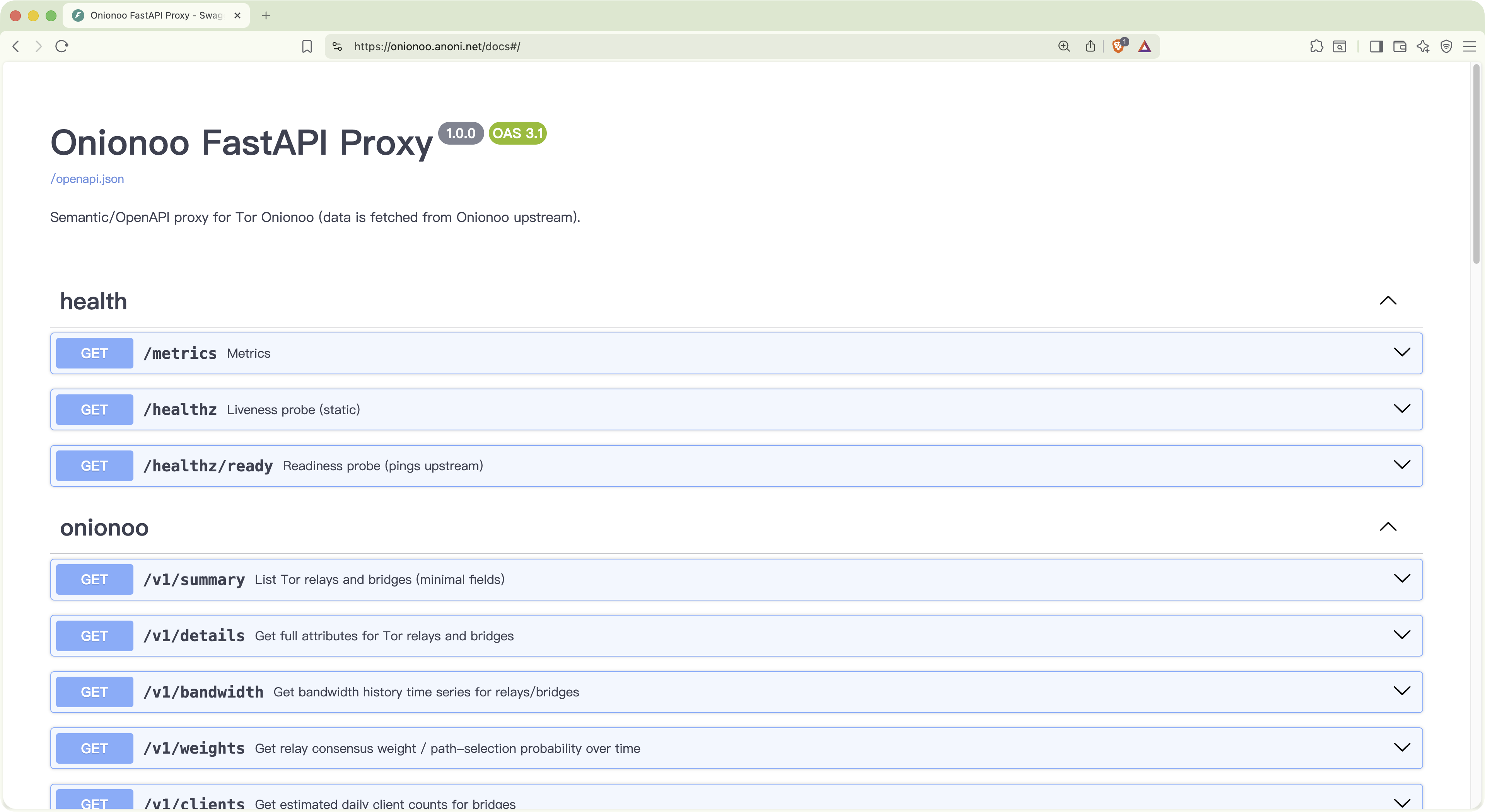

- Semantic HTTP API with OpenAPI / Swagger. Onionoo's compact field names (`n`, `f`, `a`, `r`, and friends) are remapped to readable ones (`nickname`, `fingerprint`, `addresses`, `running`), and the whole surface is described with a real OpenAPI document.

- Model Context Protocol (MCP) server. Claude Desktop, Cursor, Claude Code, and other MCP clients can query relays through tool calls instead of hand-rolling HTTP requests.

The service does not store any Onionoo data. It only forwards requests and reshapes responses. The upstream is https://onionoo.torproject.org.

- Hosted instance: https://onionoo.anoni.net

- Swagger UI: https://onionoo.anoni.net/docs

- MCP endpoint: https://onionoo.anoni.net/mcp (Streamable HTTP)

- Source code: https://github.com/anoni-net/onionoo-fastapi (MIT, currently v1.0.0)

onionoo.anoni.net/docs. Every /v1/* endpoint has a full schema and a Try-it-out button for ad-hoc testing.

API and MCP: a primer¶

API: a data interface for programs¶

An API (Application Programming Interface) is a standard way for programs to query data from each other. Onionoo itself is an API: you send an HTTP request (for example, "give me every running relay in Taiwan") and it returns JSON.

A few things to note about querying an API directly:

- The specification is written for engineers — you need to know which endpoints exist, what parameters each takes, and what fields come back.

- The answer is raw data. A typical Onionoo response is hundreds of relays' worth of detail; turning that into a trend or a conclusion means more code.

- It works best when you already know exactly what to ask.
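To make the "raw data" point concrete, here is a minimal sketch of what a direct query involves: building the request URL and then picking fields out of the JSON by hand. The `country`, `running`, and `fields` parameters come from Onionoo's protocol; the helper names and the sample response body (with made-up relays) are assumptions for illustration only.

```python
import json
from urllib.parse import urlencode

ONIONOO_BASE = "https://onionoo.torproject.org"


def details_url(**params: str) -> str:
    """Build a /details query URL; commas are kept literal for readability."""
    return f"{ONIONOO_BASE}/details?{urlencode(params, safe=',')}"


# Illustrative, heavily trimmed response body. The relays are made up.
SAMPLE_BODY = """
{"relays": [
  {"nickname": "ExampleRelayA", "fingerprint": "AAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAAA", "running": true},
  {"nickname": "ExampleRelayB", "fingerprint": "BBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBBB", "running": false}
]}
"""


def running_nicknames(body: str) -> list[str]:
    """Return the nicknames of relays flagged as running in a details document."""
    document = json.loads(body)
    return [r["nickname"] for r in document.get("relays", []) if r.get("running")]


url = details_url(country="tw", running="true", fields="nickname,fingerprint,running")
print(url)
print(running_nicknames(SAMPLE_BODY))
```

Even for this small question, the caller has to know the endpoint, the parameter names, and the response layout up front.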

MCP: an interface layer for AI tools¶

MCP (Model Context Protocol) is an open protocol Anthropic introduced in 2024. It defines a standard format for how AI models invoke external tools:

- For an AI client (Claude Desktop, Cursor, Claude Code, and others), MCP turns external services into "a list of tools plus a call format" that the model can read, decide when to use, and pick from on its own.

- For a service provider, wrapping an existing API as an MCP server means every MCP-capable AI client can connect to it directly — no need to redo the integration each time a new client appears.
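Under the hood, "a list of tools plus a call format" means JSON-RPC 2.0 messages: a client discovers tools with `tools/list` and invokes one with `tools/call`. The sketch below only builds those message bodies; it skips the initialization handshake and the transport. The `{"params": {...}}` argument shape for `get_details` is an assumption based on the tool description later on this page, not a confirmed schema.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry a unique id


def list_tools_request() -> dict:
    """Message asking an MCP server which tools it offers."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}


def call_tool_request(name: str, arguments: dict) -> dict:
    """Message invoking one named tool with a dict of arguments."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


discover = list_tools_request()
# Assumed argument shape: a single "params" dict holding the query parameters.
invoke = call_tool_request("get_details", {"params": {"country": "tw", "running": "true"}})
print(json.dumps(invoke, indent=2))
```

In practice an MCP client library produces these messages for you; the point is that the model only needs a tool name and an arguments object.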

What this changes for data exploration¶

Imagine you want to survey an unfamiliar dataset — say, "what does Taiwan's Tor relay footprint look like right now?" With only the raw API, the flow is roughly:

- Read Onionoo's documentation; find the right endpoints (`/details`, `/aggregate`, and so on).

- Write a script that combines a few queries, merges the JSON, and computes the statistics.

- Format the result into a readable table or chart.
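The manual flow above might look roughly like the sketch below. It assumes the relay records have already been fetched and uses the Onionoo details fields `running`, `as`, and `observed_bandwidth` (bytes per second). The sample relays, the documentation-range AS numbers, and the helper names are made up for illustration.

```python
from collections import Counter

# Made-up records shaped like entries in a details document's "relays" list.
SAMPLE_RELAYS = [
    {"nickname": "RelayA", "running": True, "as": "AS64500", "observed_bandwidth": 5_000_000},
    {"nickname": "RelayB", "running": True, "as": "AS64500", "observed_bandwidth": 3_000_000},
    {"nickname": "RelayC", "running": False, "as": "AS64501", "observed_bandwidth": 1_000_000},
    {"nickname": "RelayD", "running": True, "as": "AS64502", "observed_bandwidth": 2_000_000},
]


def summarize(relays: list[dict], top_n: int = 5) -> dict:
    """Compute running count, total bandwidth, and top ASNs over running relays."""
    running = [r for r in relays if r.get("running")]
    as_counts = Counter(r.get("as", "unknown") for r in running)
    return {
        "running_count": len(running),
        "total_observed_bandwidth": sum(r.get("observed_bandwidth", 0) for r in running),
        "top_asns": as_counts.most_common(top_n),
    }


def format_report(summary: dict) -> str:
    """Render the summary as a short plain-text report."""
    lines = [
        f"Running relays: {summary['running_count']}",
        f"Total observed bandwidth: {summary['total_observed_bandwidth'] / 1e6:.1f} MB/s",
        "Top ASNs:",
    ]
    lines += [f"  {asn}: {n} relay(s)" for asn, n in summary["top_asns"]]
    return "\n".join(lines)


summary = summarize(SAMPLE_RELAYS)
print(format_report(summary))
```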

With MCP wired in, it becomes:

- Ask the AI tool directly: "What does Taiwan's Tor relay footprint look like — running count, total bandwidth, top five ASNs?"

- The AI picks the tools, composes the queries, and assembles a readable report (and, with some luck, sprinkles in context — for instance noting that TANet is Taiwan's academic network).

This is especially useful for early-stage research and data exploration: you do not need to learn the dataset's schema before you can start asking questions. The AI does the first pass of querying and summarizing for you, and you decide where to dig deeper after reading its report. When some queries need to be repeated or wired into a formal analysis, the API is still there — the two paths coexist.

Why this service¶

Onionoo's specification is solid, but it ships without an OpenAPI description, and its field names are short (optimized for transfer size). That works for a human writing a client by hand. It is less friendly to AI agents and third-party tooling:

- No OpenAPI means tools like Swagger UI, Postman, or code generators can't introspect it.

- The short field names confuse language models: is `r` a relay or just `running`?

- A single user question often needs several endpoints stitched together (`/details` + `/uptime` + `/bandwidth`). Agents that re-derive that orchestration from scratch each time make mistakes.

onionoo-fastapi fixes all three: it ships an OpenAPI spec, exposes readable field names, and bundles common multi-endpoint tasks into single MCP tools (for example, "give me the health of this relay" is one call).
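As an illustration of the bundling idea (not the project's actual implementation), a composite "relay health" call can fan out to the three endpoints concurrently and return one merged document. The `fetch` callable is injected so the sketch runs without network access; `fake_fetch` and the result layout are assumptions, while `lookup` is Onionoo's fingerprint lookup parameter.

```python
import asyncio
from typing import Awaitable, Callable

# A fetcher takes an endpoint path and query parameters and returns parsed JSON.
Fetch = Callable[[str, dict], Awaitable[dict]]


async def relay_health(fingerprint: str, fetch: Fetch) -> dict:
    """Query details, uptime, and bandwidth concurrently and merge the results."""
    params = {"lookup": fingerprint}
    details, uptime, bandwidth = await asyncio.gather(
        fetch("/details", params),
        fetch("/uptime", params),
        fetch("/bandwidth", params),
    )
    return {
        "fingerprint": fingerprint,
        "details": details,
        "uptime": uptime,
        "bandwidth": bandwidth,
    }


async def fake_fetch(path: str, params: dict) -> dict:
    """Stand-in for a real HTTP call; echoes what it was asked for."""
    await asyncio.sleep(0)
    return {"endpoint": path, "lookup": params["lookup"]}


result = asyncio.run(relay_health("9695DFC35FFEB861329B9F1AB04C46397020CE31", fake_fetch))
print(sorted(result))
```

Keeping the orchestration in one place means an agent makes a single call instead of rediscovering the three-request pattern each time.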

How to use it¶

1. As an MCP server (recommended for AI-agent users)¶

In Claude Desktop, Cursor, or any MCP-capable client, add this to the mcpServers block of your config:

    {
      "mcpServers": {
        "onionoo": {
          "type": "http",
          "url": "https://onionoo.anoni.net/mcp"
        }
      }
    }

Save, restart the client, and an onionoo tool group appears in the tool list. From there you can ask the agent things like:

- "Find the Tor relay named `moria1` and report its status and country."

- "List the top 10 Taiwanese (TW) relays by consensus weight."

- "Compare the two fingerprints `9695DFC35FFEB861329B9F1AB04C46397020CE31` and `847B1F850344D7876491A54892F904934E4EB85D`: versions and flags."

- "Give me Taiwan's current Tor footprint: running relay count, total bandwidth, flag distribution."

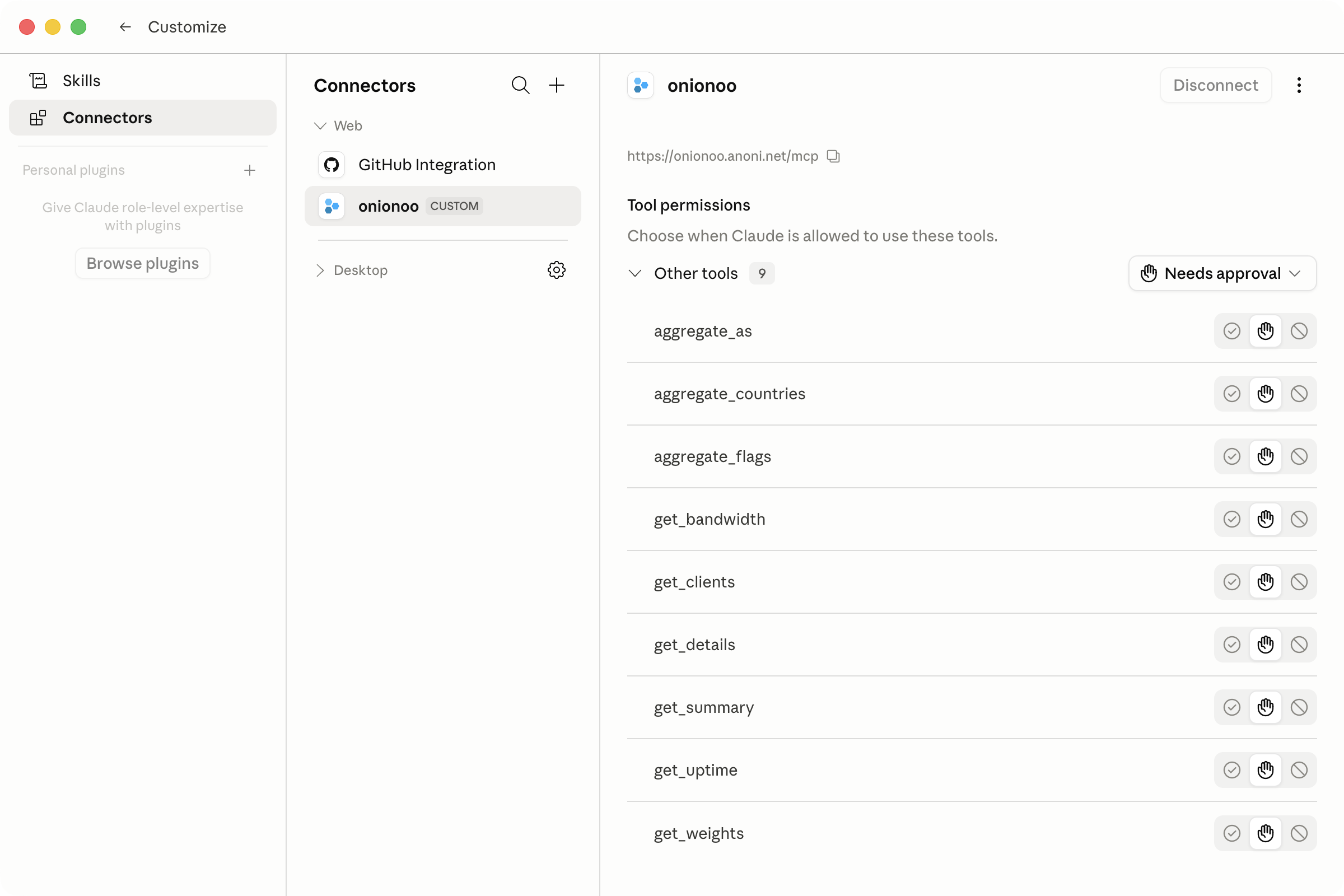

onionoo with nine tools (the six low-level endpoints plus three aggregates), all exposed through the Streamable HTTP transport. Each tool's approval requirement can be tuned per use case.

To run it locally without depending on the hosted instance, use the stdio transport:

    {
      "mcpServers": {
        "onionoo": {
          "command": "uvx",
          "args": ["--from", "git+https://github.com/anoni-net/onionoo-fastapi", "onionoo-mcp"]
        }
      }
    }

You will need uv installed (brew install uv on macOS; see the official docs for Linux).
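If your client config already lists other MCP servers, the `onionoo` entry has to be merged in rather than pasted over them. The helper below is a hypothetical sketch of that merge on an in-memory dict; where the config file lives depends on the client, so reading and writing it is left out.

```python
import json

# The two entries shown above: hosted (Streamable HTTP) and local (stdio).
HOSTED = {"type": "http", "url": "https://onionoo.anoni.net/mcp"}
LOCAL = {
    "command": "uvx",
    "args": ["--from", "git+https://github.com/anoni-net/onionoo-fastapi", "onionoo-mcp"],
}


def add_mcp_server(config: dict, name: str, entry: dict) -> dict:
    """Return a copy of config with one server added under mcpServers."""
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers[name] = entry
    merged["mcpServers"] = servers
    return merged


# Hypothetical existing config with an unrelated server already registered.
existing = {"mcpServers": {"other-tool": {"command": "other"}}}
updated = add_mcp_server(existing, "onionoo", HOSTED)
print(json.dumps(updated, indent=2))
```

Swapping `HOSTED` for `LOCAL` gives the stdio variant without touching the other entries.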

2. As an HTTP API (recommended for programmatic clients)¶

Every endpoint returns semantic JSON with a _meta envelope indicating cache state:

    # Search for relays matching "moria" (such as moria1); return only nickname and fingerprint
    curl -s 'https://onionoo.anoni.net/v1/details?search=moria&fields=nickname,fingerprint' | jq .

    # Taiwan's relays, sorted by consensus weight
    curl -s 'https://onionoo.anoni.net/v1/details?country=tw&running=true&order=-consensus_weight&limit=5' | jq .

    # Per-country aggregation of currently running relays
    curl -s 'https://onionoo.anoni.net/v1/aggregate/countries?running=true' | jq .

For the full list of endpoints, parameters, and response fields, see the Swagger UI. Query parameters mirror Onionoo's protocol specification.
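For programmatic clients, the same requests can be issued from Python's standard library. The sketch below builds the URLs used in the curl examples and separates the `_meta` envelope from the rest of the response. The helper names are hypothetical, and the keys inside `_meta` are not documented here, so the `cache` key in the sample is an assumption.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

BASE = "https://onionoo.anoni.net/v1"


def build_url(path: str, **params) -> str:
    """Build a /v1 URL; commas stay literal so field lists remain readable."""
    query = urlencode(params, safe=",")
    return f"{BASE}{path}?{query}" if query else f"{BASE}{path}"


def split_meta(document: dict) -> tuple[dict, dict]:
    """Separate the _meta envelope from the payload without mutating the input."""
    payload = dict(document)
    meta = payload.pop("_meta", {})
    return meta, payload


def fetch(path: str, **params) -> tuple[dict, dict]:
    """Perform the request (needs network access) and return (meta, payload)."""
    with urlopen(build_url(path, **params), timeout=10) as response:
        return split_meta(json.load(response))


url = build_url("/details", country="tw", running="true", order="-consensus_weight", limit=5)
# Sample document; the "cache" key is a placeholder, not a documented field.
meta, payload = split_meta({"_meta": {"cache": "hit"}, "relays": []})
print(url)
```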

3. Self-host with Docker¶

If you want to run your own copy (for a .onion service, an internal network, or experimentation):

    git clone https://github.com/anoni-net/onionoo-fastapi
    cd onionoo-fastapi
    docker compose up -d --build

It listens on port 8000 by default. OpenAPI docs are at http://localhost:8000/docs, the MCP endpoint at http://localhost:8000/mcp.

Common settings (via environment variables):

| Variable | Purpose | Default |
|---|---|---|
| `ONIONOO_BASE_URL` | Upstream Onionoo URL | `https://onionoo.torproject.org` |
| `CACHE_MAXSIZE` / `CACHE_DEFAULT_TTL_SECONDS` | In-memory cache size and TTL | 1024 / 300 s |
| `RATE_LIMIT_ENABLED` / `RATE_LIMIT_PER_MINUTE` | Per-IP rate limiting | false / 120 |
| `CORS_ALLOWED_ORIGINS` | Allowed CORS origins | empty (CORS off) |
| `LOG_FORMAT` | `json` or `console` | `json` |
| `METRICS_ENABLED` | Expose `/metrics` in Prometheus format | true |

For the full list, see the README.
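To show how these variables and defaults fit together, here is a sketch of a settings loader. It is not the project's own configuration code: the variable names and defaults come from the table above, while the `Settings` class, the accepted boolean spellings, and the comma-separated format for `CORS_ALLOWED_ORIGINS` are assumptions.

```python
import os
from dataclasses import dataclass
from typing import Mapping


def _as_bool(value: str) -> bool:
    """Interpret common truthy spellings; anything else is False (assumed rule)."""
    return value.strip().lower() in {"1", "true", "yes", "on"}


@dataclass(frozen=True)
class Settings:
    onionoo_base_url: str
    cache_maxsize: int
    cache_default_ttl_seconds: int
    rate_limit_enabled: bool
    rate_limit_per_minute: int
    cors_allowed_origins: tuple
    log_format: str
    metrics_enabled: bool


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read the documented variables, falling back to the documented defaults."""
    origins = env.get("CORS_ALLOWED_ORIGINS", "")
    return Settings(
        onionoo_base_url=env.get("ONIONOO_BASE_URL", "https://onionoo.torproject.org"),
        cache_maxsize=int(env.get("CACHE_MAXSIZE", "1024")),
        cache_default_ttl_seconds=int(env.get("CACHE_DEFAULT_TTL_SECONDS", "300")),
        rate_limit_enabled=_as_bool(env.get("RATE_LIMIT_ENABLED", "false")),
        rate_limit_per_minute=int(env.get("RATE_LIMIT_PER_MINUTE", "120")),
        # Assumed format: comma-separated list; empty means CORS is off.
        cors_allowed_origins=tuple(o.strip() for o in origins.split(",") if o.strip()),
        log_format=env.get("LOG_FORMAT", "json"),
        metrics_enabled=_as_bool(env.get("METRICS_ENABLED", "true")),
    )


defaults = load_settings({})
custom = load_settings({"RATE_LIMIT_ENABLED": "true", "CACHE_DEFAULT_TTL_SECONDS": "60"})
print(defaults)
```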

MCP tools at a glance¶

Task-oriented tools (stdio transport, recommended for agents)¶

| Tool | Purpose |
|---|---|
| `find_relay(query)` | Free-form lookup; auto-detects whether the query is a 40-character fingerprint, an AS number, an IP, or a nickname substring |
| `get_relay_health(fingerprint)` | A composite health snapshot: details + uptime + bandwidth in one call |
| `top_relays_by_bandwidth(country?, flag?, limit)` | Top-N relays by consensus weight, optionally filtered by country or flag |
| `compare_relays(fingerprints)` | Fetches details for several fingerprints in parallel for side-by-side comparison |
| `country_summary(country)` | Running count, total bandwidth, and flag distribution for one country |
| `aggregate_relays(group_by, running, top)` | Server-side group-by over country / AS / flag |
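The auto-detection in `find_relay` can be pictured with a sketch like the one below. This is an illustrative classifier, not the tool's actual logic: it assumes AS numbers are written with an `AS` prefix and checks the most specific patterns first, falling back to a nickname search.

```python
import ipaddress
import re

FINGERPRINT_RE = re.compile(r"[0-9A-Fa-f]{40}")
AS_NUMBER_RE = re.compile(r"AS\d+", re.IGNORECASE)  # assumed "AS1234" spelling


def classify_query(query: str) -> str:
    """Guess what kind of identifier a free-form relay query is."""
    q = query.strip()
    if FINGERPRINT_RE.fullmatch(q):
        return "fingerprint"
    if AS_NUMBER_RE.fullmatch(q):
        return "as_number"
    try:
        ipaddress.ip_address(q)  # accepts both IPv4 and IPv6 literals
        return "ip"
    except ValueError:
        return "nickname"


for example in ("9695DFC35FFEB861329B9F1AB04C46397020CE31", "AS64500", "192.0.2.1", "moria1"):
    print(example, "->", classify_query(example))
```

One ambiguity the sketch does not resolve: a relay nicknamed something like `as123` would be treated as an AS number.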

Low-level pass-through (both transports)¶

get_summary / get_details / get_bandwidth / get_weights / get_clients / get_uptime: thin wrappers over the corresponding Onionoo endpoints. Each takes a params dict and returns the semantically renamed JSON.
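The renaming step can be sketched as a small key mapping. Only the four pairs mentioned on this page (`n`, `f`, `a`, `r`) are included; the real mapping covers more fields, and the function names and sample record here are hypothetical.

```python
# Short Onionoo summary keys and the readable names they map to.
FIELD_NAMES = {
    "n": "nickname",
    "f": "fingerprint",
    "a": "addresses",
    "r": "running",
}


def rename_fields(record: dict) -> dict:
    """Rename known short keys; unknown keys pass through unchanged."""
    return {FIELD_NAMES.get(key, key): value for key, value in record.items()}


def rename_summary(document: dict) -> dict:
    """Apply the renaming to every relay in a summary-style document."""
    renamed = dict(document)
    renamed["relays"] = [rename_fields(r) for r in document.get("relays", [])]
    return renamed


# Made-up summary document using the compact field names.
sample = {"relays": [{"n": "ExampleRelay", "f": "A" * 40, "a": ["192.0.2.10"], "r": True}]}
readable = rename_summary(sample)
print(readable["relays"][0])
```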

The Streamable HTTP endpoint at `/mcp` exposes the six low-level wrappers plus three aggregate tools (countries, as, flags). The task-oriented tools and the unified `aggregate_relays` live on the stdio transport. The two transports can run side by side.

Example: surveying Taiwan's Tor footprint with an agent¶

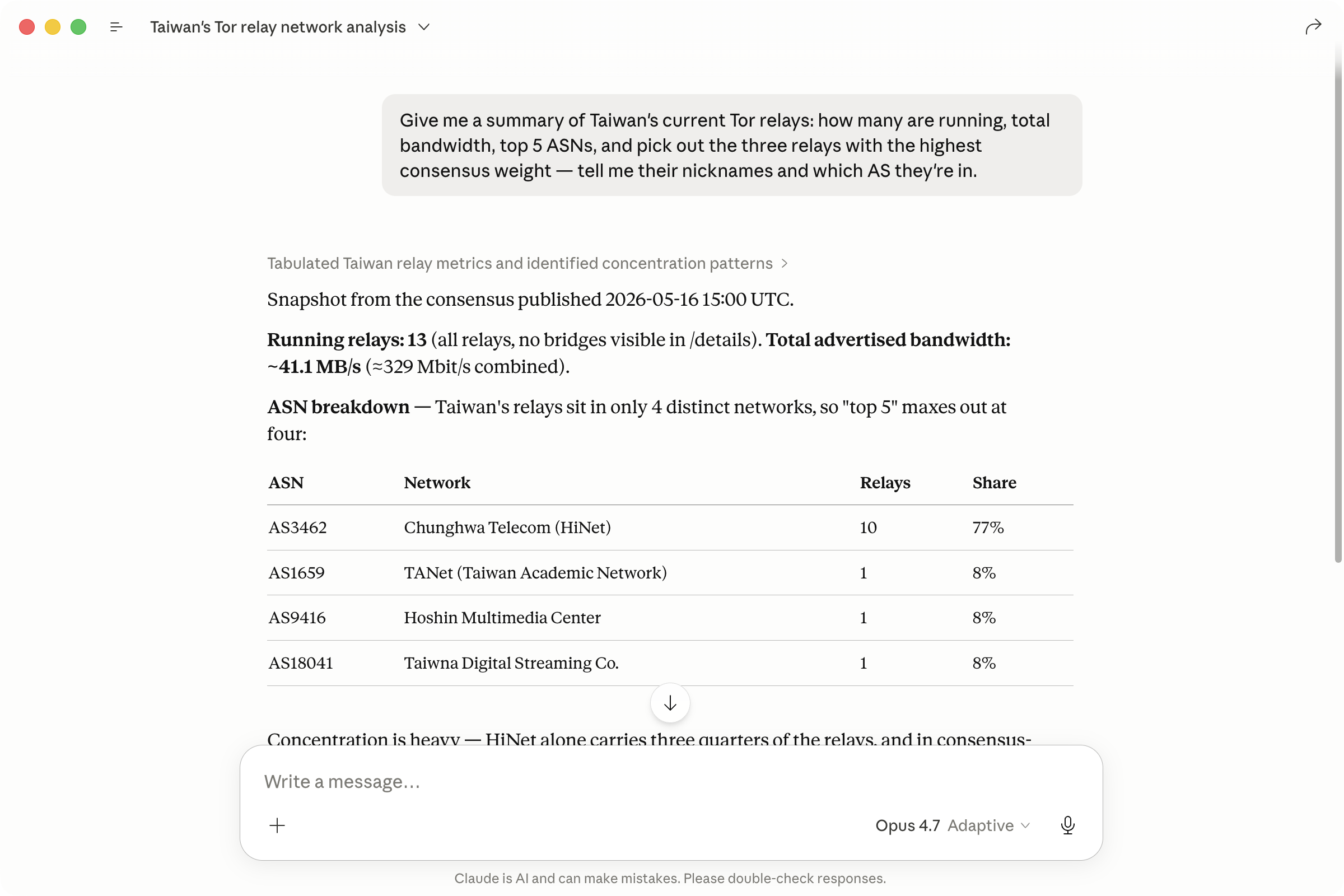

Once onionoo MCP is wired into Claude Desktop or Claude Code, you can ask:

"Give me a summary of Taiwan's current Tor relays: how many are running, total bandwidth, top 5 ASNs, and pick out the three relays with the highest consensus weight — tell me their nicknames and which AS they're in."

The agent breaks the question into a handful of MCP tool calls (over the HTTP transport, that's typically aggregate_countries plus get_details; over stdio, the task-oriented tools country_summary, aggregate_relays, and top_relays_by_bandwidth are available too) and assembles a single report. Queries like this previously meant manually composing Onionoo parameters and merging JSON. Now they are a single sentence.

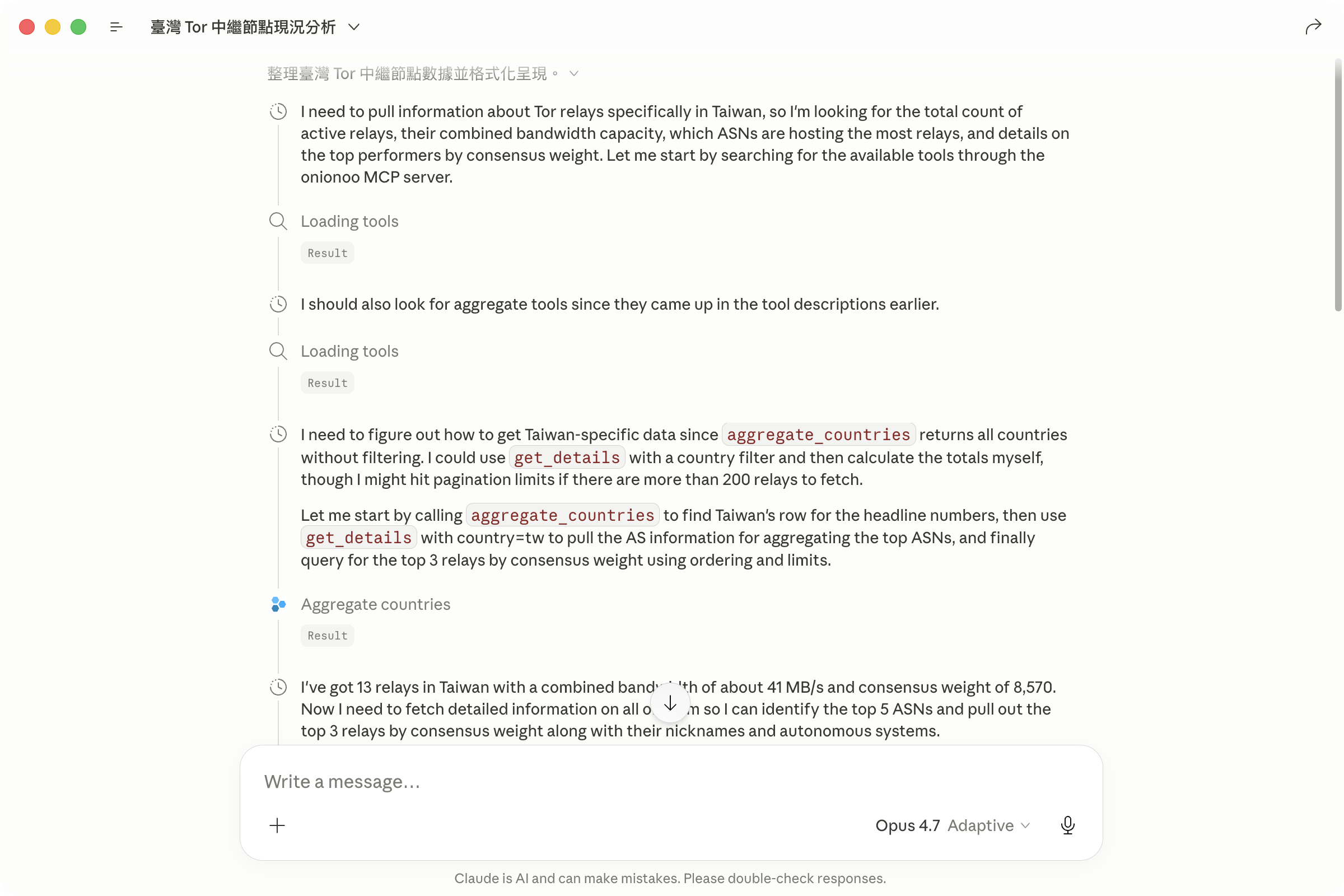

Expanding the model's reasoning shows it asking the MCP server which tools are available, then planning which ones to combine:

aggregate_countries and get_details tool calls inlined. The full MCP interaction is visible, which makes debugging and prompt tuning much easier.

Observability and operations¶

- `/healthz`: static liveness check, never hits upstream.

- `/healthz/ready`: pings Onionoo (cached); 200 if reachable, 503 otherwise.

- `/metrics`: Prometheus format. Includes cache hit/miss counters (`onionoo_cache_hits_total` and `_misses_total`), upstream latency (`onionoo_upstream_seconds`), and error rates.

- Every request gets an `X-Request-ID` echoed in the response header and bound into log records, which is handy for correlating issues.
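One way to use the cache counters is to compute a hit ratio from a `/metrics` scrape. The sketch below parses Prometheus text exposition and sums samples per metric name. The sample text is made up, the full name `onionoo_cache_misses_total` is inferred from the abbreviated mention above, and samples are assumed to carry no trailing timestamp.

```python
def parse_counters(text: str) -> dict[str, float]:
    """Sum sample values per metric name, ignoring comments and labels."""
    totals: dict[str, float] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        name_and_labels, _, value = line.rpartition(" ")
        name = name_and_labels.split("{", 1)[0]
        totals[name] = totals.get(name, 0.0) + float(value)
    return totals


def cache_hit_ratio(text: str) -> float:
    """Return hits / (hits + misses), or 0.0 when there is no traffic yet."""
    counters = parse_counters(text)
    hits = counters.get("onionoo_cache_hits_total", 0.0)
    misses = counters.get("onionoo_cache_misses_total", 0.0)
    total = hits + misses
    return hits / total if total else 0.0


# Made-up scrape output for illustration.
SAMPLE_METRICS = """
# TYPE onionoo_cache_hits_total counter
onionoo_cache_hits_total 90
# TYPE onionoo_cache_misses_total counter
onionoo_cache_misses_total 10
"""

ratio = cache_hit_ratio(SAMPLE_METRICS)
print(f"cache hit ratio: {ratio:.0%}")
```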

Get involved¶

- Report bugs or suggest tools: https://github.com/anoni-net/onionoo-fastapi/issues

- Want to run your own Tor relay? See the Tor Relays Observatory for the data we publish on relays in the region.

- Background reading: the About page covers governance and how the wider site fits together; the Regional Observatory is where this service's outputs feed back into public analysis.

Released as v1.0.0 under MIT. Issues, PRs, and Matrix discussion on which task-oriented tools to add next are all welcome.